Last week we discussed how doctors perform medicine, and what parts of the process are worth automating. It turns out that deep learning is a very good match for some of the most time consuming (and therefore costly) parts of medicine: the perceptual tasks.

We also saw that many decisions simply fall out of the perceptual process; once you have identified what you are seeing or hearing, there is no more “thinking” work to do. In fact, the answers these systems arrive at can be superhuman. The head of the computer vision research lab where I study recently wrote this:

“In situations where the only information required to make the decision is in the signal itself, machine learning wins by a small margin.”

Professor Anton van den Hengel

It turns out that quite a large subset of medical tasks are like this, which we will explore in more detail today.

To begin with we should recognise that automating a subset of medical tasks is not the same as automating all of medicine. There are lots of things doctors do that are not perceptual or that require additional conscious thought. So if only parts of medical jobs can be automated with this technology, what exactly are we talking about when we say “deep learning could replace doctors”? To understand this we need to delve into what automation is and how it affects workers. Hopefully we can clear up some common misconceptions along the way.

Standard disclaimer: these posts are aimed at a broad audience including layfolk, machine learning experts, doctors and others. Experts will likely feel that my treatment of their discipline is fairly superficial, but will hopefully find a lot of interesting and new ideas outside of their domains. That said, if there are any errors please let me know so I can make corrections.

Second disclaimer: Sorry, but I broke one of my self-imposed blogging rules and wrote another long one. Today’s article is just over 3800 words. In my defense, I got very distracted by the chess section. Given the length of this post, I am going to include a “summary” box at the bottom to help the tl:dr crowd 🙂

Automation is not about jobs

A general definition of automation is the replacement of human labour with non-human effort.

Note that the word “job” is not part of this definition. A job is a combination of various related tasks. Automatic systems are very narrowly focused on specific tasks. They do one thing really well, but can’t really do anything else. Even with deep learning we struggle to teach individual neural networks more than a couple of closely related tasks.

So automation is not the replacement of human jobs, rather as economist Daron Acemoglu says:

“Automation is the replacement of tasks previously performed by labor”.

This idea has also been popularised by Richard and Daniel Susskind in their book “The Future of the Professions: How Technology Will Transform the Work of Human Experts”, where they talk about how any complex job can usually be decomposed into multiple simple tasks.

This distinction between jobs and tasks appears to have caused a lot of confusion, because many people seem to think that automation is an all or nothing process. A very common argument against medical automation is that “machines can’t do …”, where the blank is something like empathy, or surgery, or communicating with other health professionals. These arguments seem to assume that unless a machine can do everything a doctor can, doctors are not at risk from automation.

This is not the case.

Jobs have only ever been partially replaced by technology (i.e. we only ever automated tasks). Let’s have a look at a few examples to understand what I mean:

- Steam train conductors replaced horse-drawn vehicle drivers for moving large quantities of goods between fixed points.

- Highly skilled metalworkers weren’t needed on assembly lines, which allowed novices to perform simple tasks.

- The horse-drawn plough, combine harvester and modern automated farming equipment have massively reduced the need for human agricultural labour.

You can see that the jobs didn’t actually disappear. Even today we still have hansom cab drivers, metalwork artisans and fruit pickers. But we have seen many of the original tasks disappear, and we lost many jobs with them because we needed fewer people to do the work that is left over. Agriculture is a great example, because the progress of automation is well characterised.

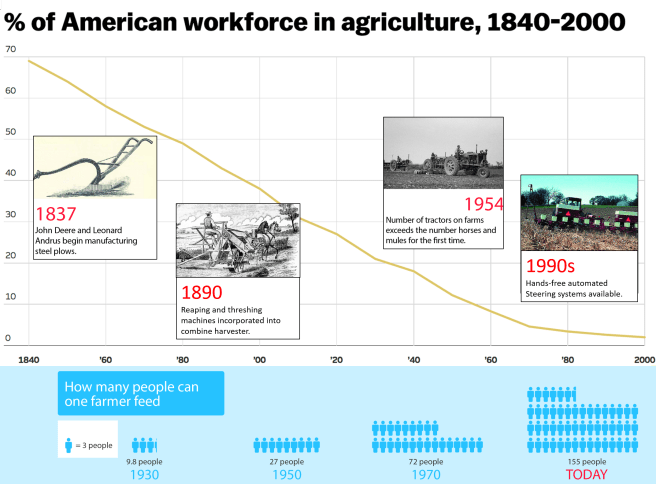

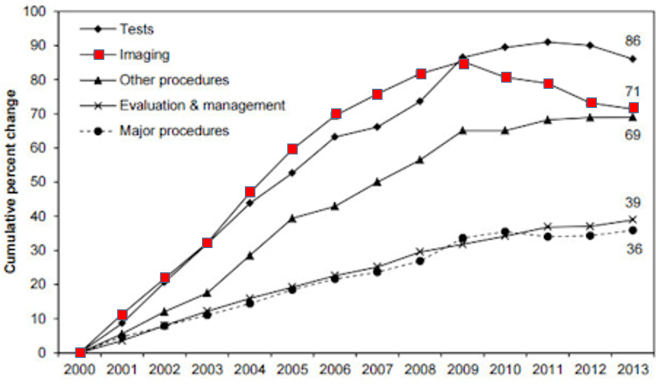

This just happens to be a US graph; the trend is similar across the world.

At any given year in this graph, the innovations could only replace some tasks. For example, the mechanical reaper in the mid 1800s was said to replace the work of five men. Consider what that means: reaping is a single task, performed periodically. Commodity crop farms still needed to bind, thresh, plant, and weed. Farm work was not replaced wholesale. But jobs still disappeared.

Instead of thinking that five jobs got automated per reaper, we should see that five jobs’ worth of labour tasks were automated. The result in this example is the same though: five farm workers had to move to the city to work in factories.

It is not always the case that an equivalent number of jobs disappear, which I think is a source of confusion. To understand this variability we need to use a new word: disruption.

Disruption is a term that can be used to describe the impact of automation. In this context, we are talking about the impact on workers.

There is a lot of variability in the impact of automation on jobs. One automated task might have very little effect on a workforce (like MYCIN), whereas automation of another task may have a big impact (like the mechanical reaper).

The second example is highly disruptive, whereas the first is not.

There are two major factors that predict how disruptive any given automation process will be.

- The percentage of work-hours in a given job that are invested in the task. The more of a job taken up in the task being automated, the less labour is required to maintain the same level of production.

- The flexibility of demand for the product from that job. If demand can increase as prices fall, then more jobs are created to complete the remaining tasks. We have seen this in the clinical chemistry services mentioned last week (where an 80% reduction in cost per test only resulted in a 25% reduction in jobs), as well as in farming, where the demand for food increased several-fold as the population grew.

The massive increase in demand for food at least partially mitigated the job losses in agriculture.

We can even turn this into a pseudo-equation (which is clearly a massive oversimplification):

J = T − D

- J is the percentage of jobs disrupted by the automation of a task.

- T is the percentage of work-time taken up by the automated task.

- D is the percentage change in demand over the time period being considered.

- There is some scaling factor that makes T more important than D. We are trying to keep this simple, so let’s just ignore it!

Using this simplified formula (which I have titled “how to make economists weep”), if a task is automated that fills 50% of work hours, but demand increases by 25%, then only 25% of jobs are disrupted. Again, this isn’t actually true, but it is a nice way to think about the forces that balance the impact of automation.
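For readers who like to poke at numbers, here is a minimal Python sketch of the pseudo-equation. The function name `jobs_disrupted` and its parameter names are my own labels for illustration, and like the equation itself it ignores the scaling factor between T and D, so treat it as a toy rather than a model.

```python
def jobs_disrupted(task_share_pct: float, demand_change_pct: float) -> float:
    """Toy estimate J = T - D, with every value in percentage points.

    task_share_pct    -- T, share of work-time taken up by the automated task
    demand_change_pct -- D, change in demand over the period considered
    """
    # Deliberately naive: no scaling factor between T and D is applied.
    return task_share_pct - demand_change_pct


# The worked example from the text: a task filling 50% of work hours
# is automated while demand rises by 25%.
example = jobs_disrupted(task_share_pct=50, demand_change_pct=25)
print(f"Estimated jobs disrupted: {example}%")
```

Playing with the two inputs shows the balance described above: a larger task share pushes the estimate up, while growing demand pulls it back down.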

The idea that we automate tasks rather than jobs leads right into the next major misconception: that humans and machines can work together without disruption.

Augmentation is not a thing

People say that “humans will work with machines in partnership”, as an argument that automation need not be disruptive to workers. They often call this augmentation rather than automation, suggesting the machines are only helping the human perform their job. Most often this is framed as the human in a supervisory role, like a farmer who operates a harvesting machine. While this idea is really widespread (I could link to dozens of major articles), it doesn’t really make sense.

If we accept the idea that automation is the replacement of tasks, then “augmentation” is not distinct from automation. A human retains some tasks (like driving) and the machine performs other tasks (like physically reaping the wheat). The human has been augmented, allowing them to perform more work in a day. But in doing so they have replaced the many workers who were previously needed to reap the field.

Alternatively, some experts like Daron Acemoglu think that there is a distinction between enabling and replacing technologies, but that we are now firmly in the “replacing phase”. He also shows that automation lowers wages, which shouldn’t happen if the technology only makes humans more productive. There are a variety of complicated issues around quantifying the macroeconomic effects of automation, and watching Daron’s whole talk is worthwhile if you are interested.

Another problem with the idea of augmentation is that it is easy to understand what the human contributes when driving a tractor. It is less clear what a human can contribute to a system that excels at ‘cognitive’ tasks, and we might find that humans are not suited to work with some artificial intelligence systems. For example, level 2-3 self-driving cars appear to be more risky than full automation, as humans struggle to maintain the constant vigilance necessary to respond when the car decides it needs help.

There is a counter-argument here though. Some people seem to think that humans and computers working together are almost always better than computers alone.

There is one main piece of evidence that gets regularly cited:

Humans have lost to computers at chess for decades, but hybrid* human/computer teams** beat computers alone.

The idea is that humans and machines have different skillsets, and working together they are better than the sum of their parts. I believe this idea was first introduced by Garry Kasparov, and then popularised by Tyler Cowen in his book “Average Is Over”. It has since been repeated by many other authors including Erik Brynjolfsson and Andrew McAfee in “The Second Machine Age”. Both books have been highly influential in the discussion on automation, and this idea has been repeated over and over again in dozens of articles on automation in major news outlets.

But where is the evidence that human/machine chess teams are better than computer teams?

Most of it seems to come from studies performed in 2007/2008 on a fairly limited dataset. On Cowen’s own blog in 2013, he questions whether the era of hybrid teams was over. I am going to say straight out that I don’t understand the results very well (they require quite a deep understanding of chess) so I won’t pass judgement on them. But on a more meta level there are two things that strike me:

- hybrid teams were only competitive when humans were given a long time to consider their moves. Games with stricter time limits are completely dominated by computers alone.

- the major “in” that human players described around this time in forums etc. was that chess engines had idiosyncratic weaknesses against certain move combinations.

Trying to find out more has been strange – there is almost nothing in the last few years anywhere on the net. Many of the advanced chess tournaments have died out, along with several websites and forums. All I find when I look up the topic is popular tech articles proudly proclaiming that hybrid chess proves human/computer teams are just the best.

But I did discover a hidden treasure trove of data in the form of correspondence chess, which reveals that all is not rosy in the world of chess hybridisation. Correspondence chess is played via … correspondence, obviously. It occurs over long time scales (often days per move), without real time or real world interaction. Since there is no way to tell what your opponent is doing (or to ban chess computers), this became a prime venue for hybrid chess.

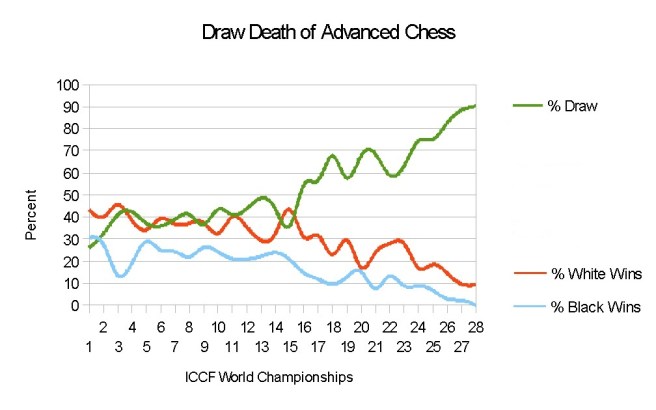

What we see is that in world championships, the draw rate skyrocketed.

Advanced chess is another name for hybrid chess*

As you can see, there was a big change around the 15th world championship, which coincides with the 1990s/2000s. Unsurprisingly, this is also when chess computers became widely available.

These days about 95% of games end in draws (100% in the latest German regional tournament!). One blogger suggests:

“The inevitable march of the machines toward 100% draws doesn’t leave much room for significant human contribution.”

Tansel Turgut, a correspondence chess grandmaster, said in 2015:

“Until recently, human could add 200-300 points to the strength of the chess engine, but this additional human input is decreasing with the improvement of chess engines.”

Presumably the situation has worsened since then, as the draw rate has gone from the mid-80% range to nearly 100%.

Even worse, this blog asserts that analysis of the games that have been made public from these tournaments has shown that nearly 100% of the moves match the recommendations of top chess computers. And as a final, “human” element to this story: “boredom” is cited as the leading reason for players quitting hybrid chess.

The only “how-to” guide to hybrid chess on the internet openly states (slight paraphrasing):

“Do not “second guess” the engine or start thinking you can play better moves. The only time you need to think for yourself is when the engine cannot decide between equally good moves.”

At the very least I think the argument that “human/computer teams will always be relevant” is unsupported by the experience in chess. It seems more likely that there is a transition period where systems with identifiable weaknesses can be augmented by human assistance (this “brittleness” was much more of a problem before deep learning, and has not been described with DeepMind’s AlphaGo).

We might get some new data in the future, because DeepMind is planning a new set of Go matches this year (with modern AI technology), including several matches with hybrid teams. But honestly, it seems like this whole hybrid chess thing was never the world-changing insight it has been portrayed as.

What does this mean for medicine?

So, I’ve hopefully convinced you that any level of task automation or augmentation can be disruptive. To understand what automation might look like in medicine, we need to think about the two major factors I described above.

We started by acknowledging that deep learning is suited to perceptual tasks, but other sorts of tasks may not be easily automated. This will give us our bounds on how much of medicine is at risk of automation.

Factor 1 – The tasks of medicine

Medicine is roughly made up of:

- paperwork

- perception (look, listen, touch)

- physical intervention (surgery, procedures)

- decision making

- talking (to other doctors, to patients)

- research, teaching etc.

For the purposes of this discussion, let’s ignore paperwork. While it is probably the biggest time-sink for most doctors, the automation of paperwork for an AI system that can already perform the other tasks is more of an engineering challenge than a theoretical one. The paperwork (kind of) comes for free.

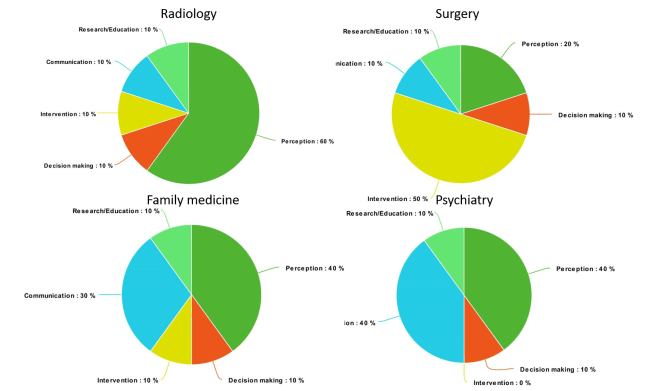

The amount each task makes up of the whole job varies by specialty, and there is even a lot of variation within specialties, but I am only trying to do ballpark estimates. Feel free to disagree with me and drop a comment here or on social media, I’m happy to update these plots if I need to.

As a preemptive apology to all you data-viz people, I am sorry but I am going to use pie-charts. I couldn’t think of a better visualisation for this. I did resist making them 3D, or into doughnut plots 🙂

A rough estimate of the per-task contribution to various medical jobs (pies via Meta-Chart)

It might not be perfect, but it seems about right. Radiology is probably very similar to pathology, and most of internal medicine is somewhere between family medicine (which is actually called general practice in my country) and surgery.

Here is the key point. If we accept that perceptual tasks and decision making are at risk of automation in the near to medium term, then our charts look frankly terrifying, even for the specialties we weren’t really worried about before.

AAAAAARRRRRGGGGHHHH!

We can see that a huge chunk of the tasks of medicine could be automated, if deep learning works like Prof Hinton expects (remember that we haven’t actually accepted that yet, and we will be exploring that topic in a few weeks).

Factor 2 – The flexibility of demand

We saw in our equation that automation can be (and often is) offset by increased demand as the price of the product drops. So the question is, how flexible is the demand for medicine?

First up, we have to understand there are two sorts of medical systems in the world: the developed world (with nearly universal coverage), and the developing world (with nowhere near enough access to healthcare). Each system will respond differently to cheaper medical tasks.

We also have to recognise that medicine is not monolithic. Different specialties will have different growth potentials, but let’s use radiology as an example. I know radiology best, and I think it reflects general trends reasonably well.

In the developing world, a sudden drop in costs would be revolutionary. There is a huge unmet demand, limited by access to doctors, technologists and technology. So in these countries, we could see a sudden increase in demand to offset the drop in radiologists’ workload.

We can see there is hugely unequal access to radiology services. This is data on the number of CT scanners, but the same patterns are seen with other measures of access.

In the developed world, this doesn’t appear to be the case. For many decades the growth of imaging demand outstripped the growth of radiology as a profession (with a ratio of about 3:1), but this trend appears to have stopped or even reversed. In fact, for the last seven or eight years, the number of imaging studies performed per year has fallen.

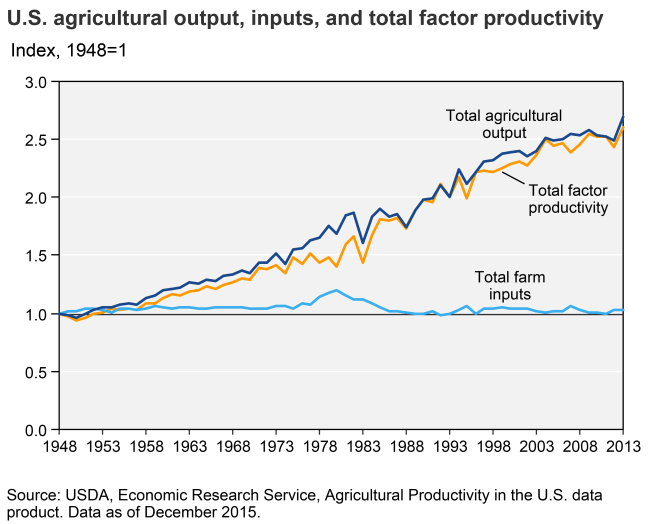

Can anyone believe it was not long ago that journals only accepted black and white figures? A splash of colour improves any plot!

No-one quite knows why this is. There were some big studies that came out around 08/09 that warned of the radiation risks of CT scanning in particular, so that might have played a role. But it is entirely possible that our healthcare markets have reached “peak image” (especially because this trend is seen in all OECD nations).

The point is, developed nations might be very close to the maximum utilisation of medical services. There are some exceptions (like waiting lists for “elective” surgery in the NHS) but in general if someone needs to see a doctor they can. Most OECD nations don’t even charge the individual for that privilege.

So demand might not increase as prices fall, which makes the piecharts of doom even scarier. If 70% of radiology is at risk of automation, and demand for images is falling 2-5% per year? We are talking about unprecedented disruption.

I say unprecedented for a reason. The evaporation of 90% of farming jobs occurred over two centuries. The loss of 25% of jobs in clinical chemistry took 50 years. For a more contemporary example, manufacturing in the developed world has halved in 30 years.

If we are looking at 70% of radiology disappearing in a decade or two, then not only is it faster than ever before, but it is also the first major disruption of the highest skilled section of our workforce. It takes over ten years to train a radiologist from the start of college/university.

This timeline is a big part of what Prof Hinton is talking about. There are people starting medical school today that intend to become radiologists in the early 2030s. That is a long way out to be making predictions about these job markets, so in the coming posts we are going to explore the factors that make the medpocalypse scenario more or less likely.

Before we go, I will try to answer one more thing: assuming any decrease in demand for radiologists will first result in the reduced employment of new radiologists (rather than the firing of working radiologists), how much automation do we need before Prof Hinton is right and “we should stop training radiologists”?

A recent census (pdf link) in the UK shows that the radiology workforce grows at about 2-3% per year. While the UK College does not appear to have statistics from before 2010, in Australia a similar census showed 7-10% growth between 2004 and 2012 (remember that imaging demand was growing until about 2009, so this change in growth rate makes sense).

The same censuses (censi?) suggest a retirement rate of about 1-2% per year. About 30% of radiologists in Australia and the UK are trained overseas. If we combine these three numbers we see that about 5% of the workforce each year is newly graduated radiologists, being generous.

So deep learning has to displace about 5% of radiology work per year at a minimum to reduce the need for new radiologists to zero. If Prof Hinton is right, this is the tipping point.

On one hand, that is pretty reassuring. Displacing 5% of a workforce in a year is a big deal, and doing it year on year for long enough to change College training policy is even more remarkable. Even in the worst case scenario, where 70% of all radiology tasks are displaced, if that takes more than 14 years we will still need to be training radiologists.
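The 14-year figure is just the at-risk share of work divided by the tipping-point rate. Here is a rough Python sketch of that back-of-the-envelope sum; the function name and parameter names are illustrative assumptions of mine, and it assumes displacement happens at a steady rate measured against the original workload, which real adoption almost certainly would not follow.

```python
def years_to_displace(at_risk_share_pct: float, displaced_per_year_pct: float) -> float:
    """Years needed to displace a given share of work at a constant yearly rate.

    Both arguments are percentages of the original workload, so this is
    simple linear arithmetic with no compounding.
    """
    if displaced_per_year_pct <= 0:
        raise ValueError("displaced_per_year_pct must be positive")
    return at_risk_share_pct / displaced_per_year_pct


# Worst case from the text: 70% of radiology tasks at risk,
# displaced at the 5% per year tipping point.
years = years_to_displace(at_risk_share_pct=70, displaced_per_year_pct=5)
print(f"Years to displace the at-risk work: {years:.0f}")
```

If displacement runs slower than 5% per year, the same at-risk share takes longer than 14 years to disappear, and new radiologists are still needed throughout.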

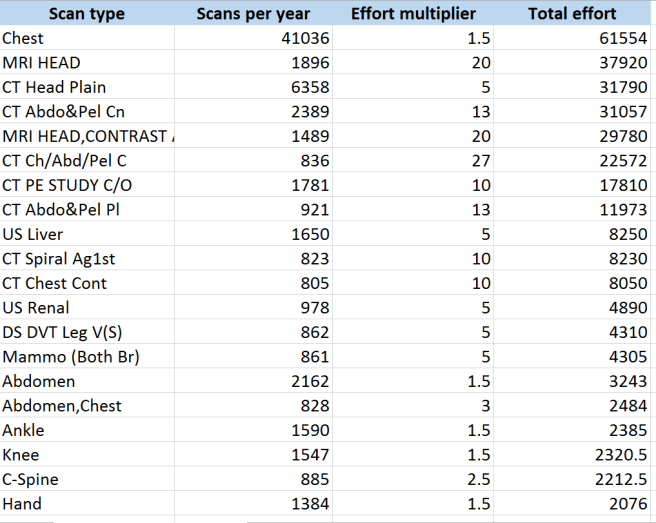

But at the same time, a lot of effort is localised in a few tasks. In fact, about 60% of the diagnostic work radiologists do at my hospital is in three studies (chest x-rays, head CT and head MRI).

The “effort multiplier” comes from UK College of Radiology research. I always consult this chart when I am considering new AI projects in radiology.

Just by focusing on those three groups of tasks alone, there is a lot of scope for much greater than 5% displacement in a reasonably short space of time. Easier said than done, though.

Next week we will be talking about the major barrier to disruption that most experts and pundits bring up in these discussions, and that is medical regulation.

See you next week.

SUMMARY / TL:DR

- Automation replaces tasks, not jobs. How much time the task takes a human determines how many jobs are lost.

- Machines that “help” or “augment” humans still destroy jobs and lower wages.

- Hybrid* chess does not prove that human/machine teams are better than computers alone. STOP SAYING THIS, tech people!

- Deep learning threatens tasks that make up a terrifyingly large portion of doctors’ jobs.

- In the developed world, demand for medical services may be unable to increase as automation lowers prices. Rising demand is what normally protects jobs.

* I absolutely refuse to call these teams “centaurs”. Maybe it is just the fantasy nerd in me but unless you have physically merged your lower body with the computer, don’t even.

** Is anyone else really very impressed that I found a picture of a machine/doctor hybrid playing chess?

Posts in this series

Understanding Automation

Next post: Radiology Escape Velocity

“Is anyone else really very impressed that I found a picture of a machine/doctor hybrid playing chess?”

No, I expect Google Image Search did most of the work for you 😉

This is a very good post, with some clear writing and clear thinking that go way beyond being relevant for medicine.

Thanks for a very informative and enlightening post! Looking forward to the rest of the series.

Don’t know if I should share it with my radiology friends, however 🙂

Thanks Rama.

If it helps, my next piece might provide a bit of relief for our medical colleagues 🙂

Hi Luke. Thanks for the series. I’m a fellow Australian doctor who has research interests in machine learning but not in the automation or radiology area.

Interesting series and thanks for taking the time to write it. I have some slightly different thoughts about AI and its ability to disrupt.

I think most medicos are not prepared for AI. The scope and extent to which AI will influence medicine, and the speed at which it will happen, will shock most doctors.

I think the risk to radiologists and pathologists as stated by Hinton is pretty spot on and I think most doctors are in denial about it.

Even if the theory and methods of AI were to remain similar to what they are today, I think within 10 years we are looking at major changes in the workflow of radiologists that will likely reduce the need for radiologists by 25-50%. I think this is the most optimistic scenario. I think the more likely scenario is that the technology will evolve far quicker. If we look at the ImageNet competition in the 2010s and how image recognition accuracy went from 70% to almost 100%, that gives an idea of the rapidity of the technological gains. Neural nets are just one aspect of ML. When you think about the exponential increase in funding for ML, coupled with newer theoretical aspects of ML like meta-learning, I think it reflects just how quickly things are moving.

I think the biggest aspects to delay AI adoption will actually be the colleges pushing back to protect themselves in the western world. The game changer will be when second and third world countries with limited radiologists aggressively adopt the AI and prove them to be superior in clinical trials. I think once this happens, it would be all but unethical for the western world not to adopt what will then be the gold standard. I would be surprised if this didn’t happen within 20 years.

Does this mean the end of the road for Medicos?

No. My take on which specialties are safer differs to yours in part.

I think with the advent of AI systems, a lot of internal medicine specialties are at risk – endocrinology comes to mind as potentially the most at risk. In fact endocrinology could partially be replaced today by tree diagrams. Medicine will devolve and evolve. My thoughts are that there will be fewer specialists – those that remain will have very subspecialised knowledge.

We will move back towards a generalist model, with generalists who rely on AI for specialist knowledge. GPs will evolve but will be fairly safe in the medium term, primarily because they do a lot of small procedures, paperwork like medical certificates, and counselling. Interventionalists will also be fairly safe, predicated on robotics not advancing as rapidly as AI. I do feel that when robotics matures enough, it will rapidly overtake interventionalists. I also think when it does happen it won’t look like Da Vinci.

Ultimately psychiatry and palliative care will be the safest specialties. At their core they are about human communication and rapport. Until society endows the same trust in AI and robotics as a human, these specialties will be very safe. This will take generations.

Talking with my medical colleagues, I feel like few people understand the rapid progress in AI and what it is capable of now and in the future.

I think I will end in referencing Alpha Go Game 2, move 37 against Lee Sedol. I think that is what is coming to medicine and when it does it will be beautiful.

I really feel like automation and robotics are going to be adopted in a very asymmetrical way across the healthcare industry. It’s relatively easy to see how robotics might be a better option for certain kinds of medical procedures, while being all but useless in prioritizing specific personal concerns with the best medical practices and what’s practically possible.
